Key Takeaways

- A comprehensive guide to "GLM-4.7-Flash Review: The Ultra-Fast, Ultra-Cheap, High-Performance Price-Disruption" in 2026, focusing on implementation and best practices.

- A technical deep dive into the architecture, tools, and ecosystem that define "GLM-4.7-Flash Review: The Ultra-Fast, Ultra-Cheap, High-Performance Price-Disruption".

- Strategic insights and actionable advice for developers mastering "GLM-4.7-Flash Review: The Ultra-Fast, Ultra-Cheap, High-Performance Price-Disruption" in the modern era.

In January 2026, another shock hit the AI industry. Zhipu AI (智譜AI) in China announced GLM-4.7-Flash.

True to the name Flash, its biggest feature is overwhelming generation speed. But it is not just fast: the price is surprisingly low, and performance is more than adequate for practical use. It has quickly emerged as a strong rival to GPT-4o-mini and Gemini 1.5 Flash, long the go-to choices for cheap, fast inference.

This time, we will thoroughly dissect the real power of GLM-4.7-Flash through benchmarks and real use cases.

Spec Comparison: The Shock of Price Disruption

| Item | GLM-4.7-Flash | GPT-4o-mini | Gemini 1.5 Flash | Claude 3.5 Haiku |
|---|---|---|---|---|
| Input price ($/1M tokens) | $0.05 | $0.15 | $0.075 | $0.25 |
| Output price ($/1M tokens) | $0.15 | $0.60 | $0.30 | $1.25 |
| Max context (tokens) | 128k | 128k | 1M | 200k |
| Japanese performance | Very high | High | Average | High |
| Inference speed (TPS) | 180+ | 120 | 150 | 100 |

The input token price is one-third that of GPT-4o-mini. Even if you feed it one million tokens (about 10 paperback books), it costs only 7 to 8 yen. The hurdle for individual developers to “just try it” has all but disappeared.
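To make the arithmetic behind these figures concrete, here is a minimal sketch that estimates request cost from the per-million-token prices in the table above. The `estimate_cost_usd` helper and the exchange rate of 150 yen per dollar are assumptions for illustration, not part of any SDK or of the article's measurements.

```python
# Prices in USD per 1M tokens (input, output), copied from the comparison table.
PRICES = {
    "GLM-4.7-Flash": (0.05, 0.15),
    "GPT-4o-mini": (0.15, 0.60),
    "Gemini 1.5 Flash": (0.075, 0.30),
    "Claude 3.5 Haiku": (0.25, 1.25),
}

USD_TO_JPY = 150.0  # assumed exchange rate, adjust to the current rate


def estimate_cost_usd(model, input_tokens, output_tokens):
    """Return the estimated cost in USD for one workload (hypothetical helper)."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    # Cost of feeding one million input tokens to each model.
    for model in PRICES:
        usd = estimate_cost_usd(model, 1_000_000, 0)
        print(f"{model}: ${usd:.3f} (~{usd * USD_TO_JPY:.1f} yen)")
```

At the assumed rate, one million input tokens on GLM-4.7-Flash works out to $0.05, or about 7.5 yen, which matches the "7 to 8 yen" figure quoted above.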

Benchmark: Speed Is Justice

We measured real API response speed, assuming a typical RAG (retrieval-augmented generation) summarization task.

Generation Speed (Tokens Per Second)

GLM-4.7-Flash consistently hits around 180 TPS (Tokens Per Second). In Japanese character terms, that is roughly 200 to 300 characters per second. Users never have a moment to feel they are waiting. It is an excellent fit for chatbots that require real-time responsiveness and for processing large volumes of documents.
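For readers who want to reproduce a TPS figure themselves, the following is a minimal sketch of the calculation, not the measurement harness used for this article. It assumes you can supply a token count for each streamed chunk (for example from a tokenizer of your choice); `measure_tps` and its `clock` parameter are hypothetical names introduced here.

```python
import time


def measure_tps(chunk_token_counts, clock=time.perf_counter):
    """Consume an iterable of per-chunk token counts and return tokens per second.

    Timing starts just before the first chunk is requested and stops after the
    last chunk has been consumed. Returns None if no time elapsed.
    """
    start = clock()
    total_tokens = 0
    for count in chunk_token_counts:
        total_tokens += count
    elapsed = clock() - start
    if elapsed <= 0:
        return None
    return total_tokens / elapsed
```

Passing a generator that yields counts as chunks arrive from a streaming response includes network wait time in the measurement, which is usually what an end user experiences. The injectable `clock` makes the function easy to check with fixed timestamps.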

Implementation Example: Using the Python SDK

Zhipu AI’s SDK includes an OpenAI-compatible mode, making migration very smooth.

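```python
from zhipuai import ZhipuAI

# Replace with your own API key
client = ZhipuAI(api_key="your_api_key")

# Request a streamed completion so tokens can be printed as they arrive
response = client.chat.completions.create(
    model="glm-4.7-flash",
    messages=[
        {"role": "user", "content": "Explain how quantum computers work in 3 lines"}
    ],
    stream=True,
)

# Each chunk carries an incremental delta; content may be None, hence the fallback
for chunk in response:
    print(chunk.choices[0].delta.content or "", end="")
```

Real-World Feel: How Is the Japanese?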

Worried that a China-made model might be weird in Japanese? No need. Japanese ability has improved dramatically since the previous GLM-4 version, and in 4.7, honorifics and context handling feel very natural.

JSON mode is especially stable and is less prone to unwanted format errors, which developers will welcome.
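Even with a stable JSON mode, it is usually worth validating replies before passing them downstream. The sketch below uses only the standard library; `parse_json_reply` and the key names in the usage note are hypothetical and not part of the Zhipu AI SDK.

```python
import json


def parse_json_reply(text, required_keys):
    """Parse a model reply as a JSON object and check for required keys.

    Returns the parsed dict, or None if the reply is not valid JSON, is not
    a JSON object, or is missing any required key.
    """
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    if any(key not in data for key in required_keys):
        return None
    return data
```

For example, `parse_json_reply(reply_text, ["title", "summary"])` returns a dict only when both keys are present, so the caller can retry or fall back when it gets `None`.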

Recommended Use Cases

- Real-time summarization of news articles: Ultra-fast and ultra-cheap, so you can push entire RSS feeds through it without hurting your wallet.

- Internal Q&A bots: Perfect for the generation step that composes answers from RAG search results.

- Data cleansing: Well suited to volume-heavy tasks such as name normalization and structuring unstructured data.

Conclusion: Escape Subscription Poverty

For roughly 80% of tasks, there is no need to use the strongest models (GPT-5 or Claude 3.7 Opus). Those tasks should all be offloaded to GLM-4.7-Flash.

Use the savings to spend more on a ‘serious model’ when it really counts. That is the smart way to run AI in 2026.
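One way to put this split into practice is a small routing function that sends only designated hard tasks to a premium model. This is a minimal sketch under stated assumptions: the task labels and the `PREMIUM_MODEL` name are hypothetical placeholders, and only `glm-4.7-flash` comes from the SDK example in this article.

```python
FAST_MODEL = "glm-4.7-flash"     # model name used in the SDK example above
PREMIUM_MODEL = "premium-model"  # hypothetical placeholder for a top-tier model

# Hypothetical labels for the minority of tasks that justify a premium model.
HARD_TASKS = {"complex_reasoning", "long_form_code"}


def choose_model(task_type):
    """Return the model name to use for a given task label."""
    if task_type in HARD_TASKS:
        return PREMIUM_MODEL
    return FAST_MODEL


if __name__ == "__main__":
    for task in ("summarization", "data_cleansing", "complex_reasoning"):
        print(f"{task} -> {choose_model(task)}")
```

Defaulting to the fast model and listing exceptions explicitly keeps the expensive path opt-in, which fits the idea of spending on a serious model only when it really counts.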

Must-read

From model selection to cache strategies and prompt compression. Packed with practical techniques to prevent API bankruptcy.

![[2026 Definitive Guide] 4K 27-Inch Monitor Comparison | The Top 3 for Work](/images/4k-monitor-comparison-2026.jpg)

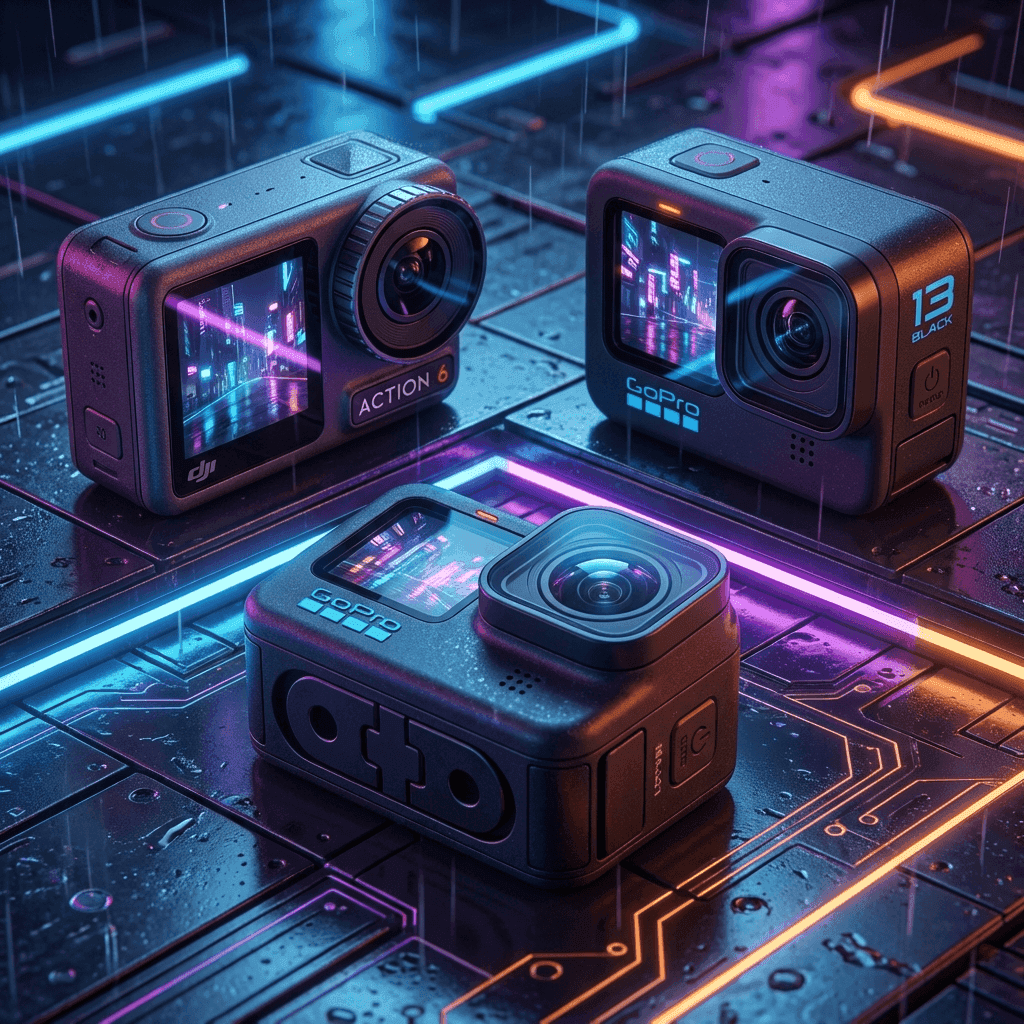

![[2026] Action Camera Showdown: GoPro Hero 14 vs Insta360 Ace Pro 2](/images/action-cameras-2026.jpg)

⚠️ コメントのルール

※違反コメントはAIおよび管理者により予告なく削除されます

まだコメントがありません。最初のコメントを投稿しましょう!