Worried about cloud API costs? Hesitant to send private data to OpenAI?

In 2026, many engineers are starting to return to on-premise AI. High-performance models in the 70B (70 billion parameter) class can now run at practical speeds on home hardware.

This article compares the two main options for running top-tier AI at home.

1. Mac Studio (M5 Ultra): The Eco-Monster of Inference

Apple Silicon's unified memory architecture is something of a cheat code for AI. Because the CPU and GPU share a single memory pool, a model can use far more memory than the VRAM ceiling of a single consumer NVIDIA card (24GB to 32GB).

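Llama 3 70B quantized to 8 bits (roughly 70GB of weights) fits comfortably in 128GB of unified memory. The unquantized FP16 weights need about 140GB, so running at full precision requires a configuration with more memory than that. What's more, power consumption is equivalent to a few light bulbs. Even when running 24 hours a day, the electricity cost is negligible.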
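As a rough sanity check on what fits in memory, the weight footprint of a model can be estimated from its parameter count and the number of bits stored per weight. The sketch below is a back-of-the-envelope calculation only: the function name `weight_memory_gb` is made up for illustration, and the estimate ignores the KV cache, activations, and runtime overhead, which all add to the real requirement.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in decimal GB.

    One billion parameters at 8 bits each is one billion bytes (1 GB),
    so the estimate is simply params * bits / 8.
    """
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    # 70B-class model at three common precisions.
    for label, bits in [("FP16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: about {weight_memory_gb(70, bits):.0f} GB of weights")
```

Comparing these numbers with the memory of the machine you are considering shows quickly which precision is realistic, before any overhead is added on top.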

2. Custom PC (RTX 5090): Strength is Power

On the other hand, if you want to do LoRA fine-tuning as well as inference, you still need an NVIDIA GPU with CUDA support. Installing two RTX 5090s (32GB of VRAM each) gives you 64GB of VRAM in total.
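Note that the 64GB is a total across two cards, not one pool, so the model has to be split between them. The following is a simplified sketch of that check. It assumes the weights are divided evenly across the GPUs, and the `headroom_gb` reserve for the KV cache and runtime overhead is a hypothetical placeholder rather than a measured value; `fits_across_gpus` is an illustrative name, not part of any library.

```python
def fits_across_gpus(
    weights_gb: float,
    gpu_count: int,
    vram_per_gpu_gb: float,
    headroom_gb: float = 4.0,  # assumed per-GPU reserve, not a measured figure
) -> bool:
    """Return True if an even split of the weights fits on every GPU."""
    per_gpu_gb = weights_gb / gpu_count
    return per_gpu_gb + headroom_gb <= vram_per_gpu_gb


if __name__ == "__main__":
    # Weight sizes for a 70B model: params (billions) * bits / 8.
    for label, weights_gb in [("FP16", 140.0), ("8-bit", 70.0), ("4-bit", 35.0)]:
        ok = fits_across_gpus(weights_gb, gpu_count=2, vram_per_gpu_gb=32.0)
        print(f"{label} ({weights_gb:.0f} GB): {'fits' if ok else 'does not fit'}")
```

Under these assumptions, only the 4-bit variant of a 70B model fits on the two-card setup, which is worth keeping in mind when comparing it with a larger unified memory pool.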

```bash
watch -n 1 nvidia-smi
# GPU 0: RTX 5090 (32GB) - Usage: 98%
# GPU 1: RTX 5090 (32GB) - Usage: 95%
# Power: 900W / Temp: 82C
```

However, as you can see, this comes with power consumption that could trip the circuit breaker and heat generation on par with a heating appliance.
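To put the power draw in money terms, the monthly electricity cost can be estimated from the wattage, the hours of use, and your local rate. In the sketch below, the 900W figure comes from the readout above, while the rate of $0.15 per kWh is purely a placeholder assumption; substitute the price on your own bill. The function name `monthly_cost` is illustrative.

```python
def monthly_cost(
    watts: float,
    price_per_kwh: float,
    hours_per_day: float = 24.0,
    days: int = 30,
) -> float:
    """Estimate the electricity cost of a constant load over one month."""
    kwh = watts * hours_per_day * days / 1000  # watt-hours -> kilowatt-hours
    return kwh * price_per_kwh


if __name__ == "__main__":
    assumed_rate = 0.15  # USD per kWh; placeholder, check your own tariff
    cost = monthly_cost(watts=900, price_per_kwh=assumed_rate)
    print(f"900 W around the clock: about ${cost:.2f} per month")
```

This treats 900W as a constant load, which is a worst case; a machine that idles most of the day will cost noticeably less. The same function works for any other machine once you have measured its actual draw.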

Cost and Performance Comparison

| Item | Mac Studio (M5 Ultra) | Custom PC (RTX 5090 x2) |
|---|---|---|
| Memory (VRAM) | 128GB (Unified) | 64GB (32GB x2) |
| Inference Speed | Fast | Blazing Fast |
| Training Ability | Weak | Strongest |
| Electricity Cost | Low | High |
| Price | Approx. $5,500 | Approx. $8,000 |

Conclusion

- If you want a 24/7 assistant: Mac Studio

- If you want to train your own models: Custom PC

Inference King

Quiet, energy-efficient, large memory. There is no hardware as sophisticated as this for a home AI server.

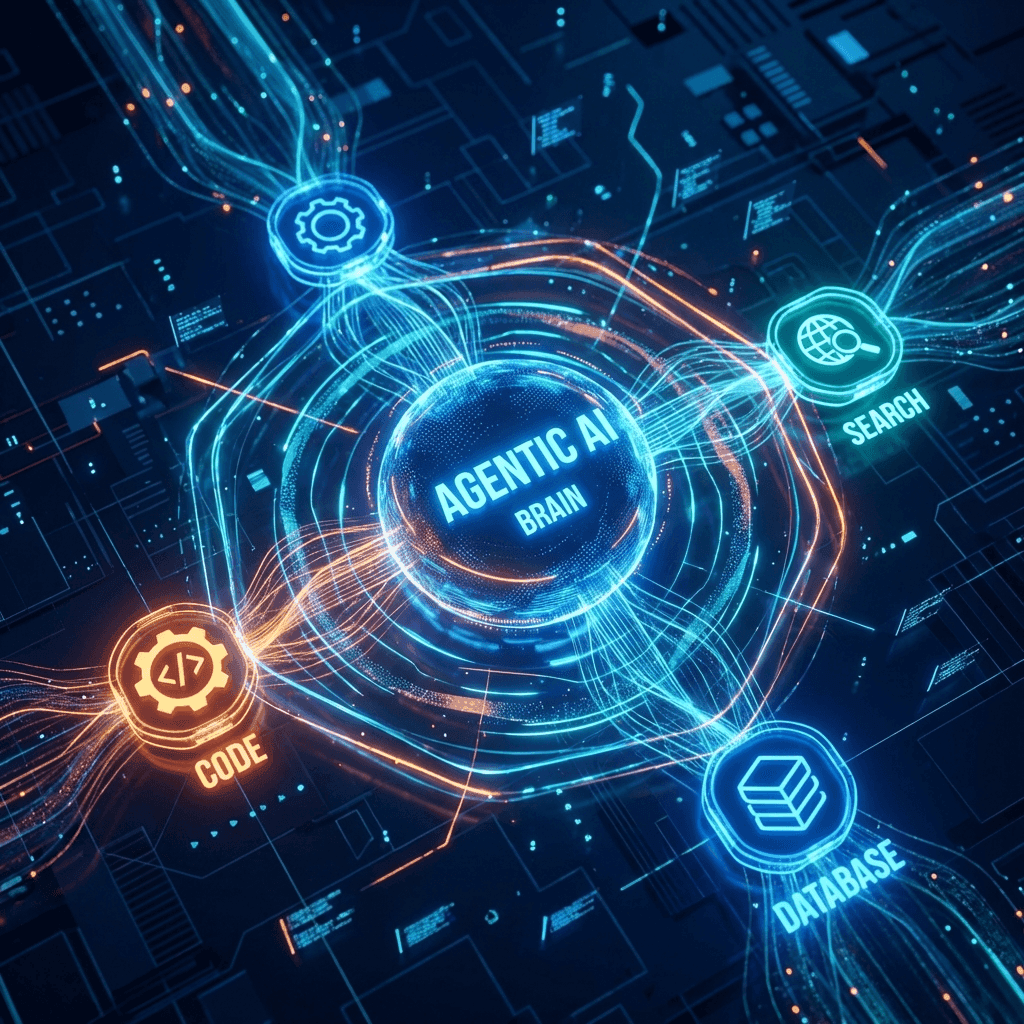

